1. Persist CPU

MEMRAY developed a non-volatile computing prototype that consists of open-licensed RISC-V cores, multiple PRAM controllers, and PRAM DIMMs. Persist CPU’s main memory consists only of PRAM DIMMs, so both code and data reside in PRAM. Persist CPU removes all DRAM-related hardware components and software stacks from the memory hierarchy and from persistence control. By guaranteeing both data and execution persistence, Persist CPU eliminates the physical and logical boundaries between non-persistent and persistent data structures. It does not need the data copies between memory and storage that hibernation and checkpoint-restart require to support persistence. Existing applications do not require any source-level modifications to take advantage of Persist CPU. In addition, Persist CPU’s contiguous execution environment can remove the need for checkpoints and external power sources, such as uninterruptible power supplies and battery- or capacitor-backed non-volatile memory.

The Persist CPU prototype guarantees on-the-fly data persistence and secures execution persistence.

Persist CPU realizes instant Power off and Power on, an OS-level, lightweight orthogonal persistence mechanism that quickly converts execution states into persistent information when power fails. Specifically, it provides a single execution persistence cut at which multiple cores can ensure that their states are persistent upon a power failure event. When power is recovered, it almost immediately resumes all the stopped user and OS kernel tasks on the processor from the previous persistence cut. This feature significantly reduces the power budget and overhead cost that current computing spends on persistence management.
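The idea of a single persistence cut shared by all cores can be sketched in a few lines of Python. This is only a toy model under stated assumptions: the `Core` and `PersistentMemory` classes, the `power_off` and `power_on` helpers, and the tiny register file are hypothetical names invented for illustration and do not describe MEMRAY's actual hardware or OS mechanism.

```python
class Core:
    """Hypothetical model of one core's volatile execution state."""

    def __init__(self, core_id):
        self.core_id = core_id
        self.pc = 0          # program counter
        self.regs = [0] * 4  # tiny register file, for illustration only

    def step(self):
        # Pretend to execute one instruction.
        self.pc += 4
        self.regs[0] += 1


class PersistentMemory:
    """Stands in for PRAM: its contents survive a simulated power loss."""

    def __init__(self):
        self.cut = None  # last committed persistence cut


def power_off(cores, pram):
    # Record every core's state in ONE cut, so all cores later
    # resume from the same consistent point.
    pram.cut = {c.core_id: (c.pc, list(c.regs)) for c in cores}


def power_on(pram, num_cores):
    # Volatile state was lost; rebuild the cores and restore them from the cut.
    cores = [Core(i) for i in range(num_cores)]
    if pram.cut is not None:
        for c in cores:
            saved_pc, saved_regs = pram.cut[c.core_id]
            c.pc = saved_pc
            c.regs = list(saved_regs)
    return cores


pram = PersistentMemory()
cores = [Core(i) for i in range(2)]
for _ in range(3):
    for c in cores:
        c.step()

power_off(cores, pram)       # power failure event
del cores                    # volatile state disappears
resumed = power_on(pram, 2)  # power recovered: continue from the cut
```

In this sketch no data is copied to separate storage: the cut itself lives in the persistent memory, which mirrors the text's point that execution resumes from the previous cut rather than from a checkpoint file.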

No need for Checkpoint-Restart.

In current computing, especially in large data centers and HPC, Checkpoint-Restart is widely adopted. To prevent massive data loss from power failures or other power-related events, data centers repeatedly and redundantly back up gigabytes to terabytes of data. The power used for this redundant but unavoidable process takes up to 60% of the total power budget, leaving only 35~40% of the power for the actual data processing workload. By eliminating this redundant procedure and its related overhead, Persist CPU can save up to 60% of the power budget and the related overhead budget, and also improves computing performance.

Performance of Persist CPU

Persist CPU demonstrates MEMRAY’s PRAM Controller performance in a real computing system.

* The computing organization is the same as that of Persist CPU, but employs DRAM and SSD rather than PRAM.

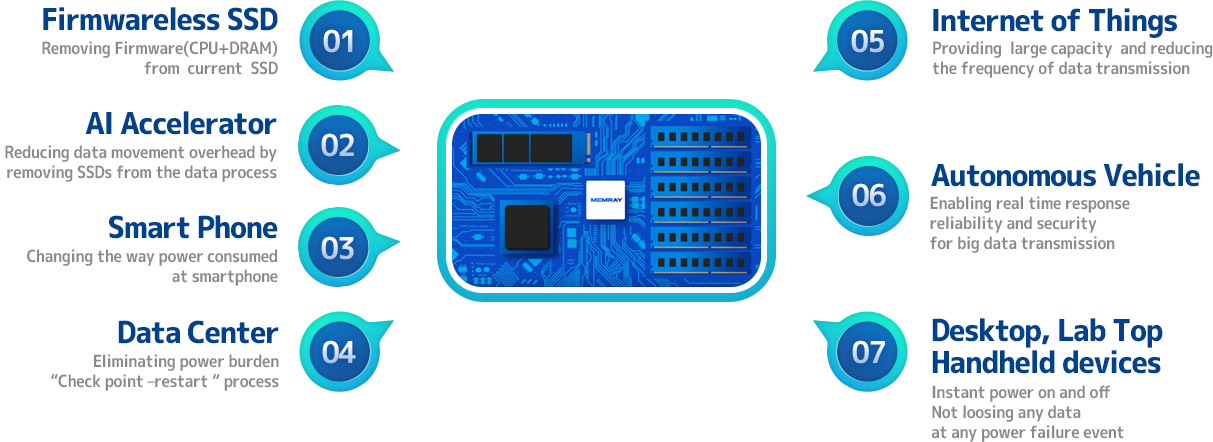

2. Firmwareless SSD

Removed firmware (CPU + DRAM) from current SSDs

- Removed the costliest and most power-consuming operations in the SSD

- Shows much better bandwidth and latency, and lower power consumption

Efficient firmware design plays a key role in modern SSDs, but we believe that firmware operations can become a burden for fast PCIe storage cards in the near future. Specifically, as the access latency of new memory is now around a few microseconds, firmware execution becomes a critical performance bottleneck.
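A rough calculation shows why a fixed firmware cost matters more as the media gets faster. The sketch below is illustrative only: the 5 µs firmware overhead and the 50 µs slower-media latency are assumed values, not measurements from MEMRAY, and `firmware_share` is a hypothetical helper. Only the "few microseconds" figure for new memory comes from the text.

```python
def firmware_share(media_latency_us, firmware_overhead_us):
    """Return the fraction of total request latency spent in firmware."""
    total_us = media_latency_us + firmware_overhead_us
    return firmware_overhead_us / total_us


FIRMWARE_OVERHEAD_US = 5.0  # assumed per-request firmware cost (hypothetical)

# Media latencies in microseconds; the slower value is an assumption,
# the faster one reflects "a few microseconds" for new memory.
for name, media_us in [("slower media", 50.0), ("new memory", 2.0)]:
    share = firmware_share(media_us, FIRMWARE_OVERHEAD_US)
    print(f"{name}: firmware accounts for {share:.0%} of request latency")
```

Under these assumptions the same firmware cost moves from a small fraction of the request latency to the dominant part of it, which is the bottleneck the paragraph describes.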

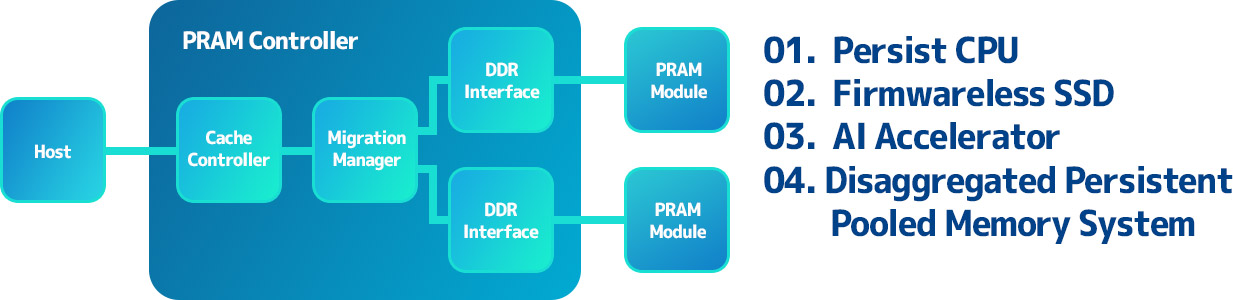

MEMRAY proposes Firmwareless SSD, which removes the internal processor and DRAM buffer resources from state-of-the-art NVMe SSD architectures by fully automating all new memory processing components in hardware. Specifically, Firmwareless SSD converts all storage management logic for new memory into pipelined hardware modules. Firmwareless SSD reads and writes host-side data directly from and to the underlying backend storage media without internal DRAM caches, employing a PRAM controller to perform I/O services directly on bare PRAM packages. Firmwareless SSD exposes this PRAM backend complex to the host through PCIe links. MEMRAY prototypes Firmwareless SSD on our non-volatile computing platform, employing a large number of PRAM packages as a representative of new memory technologies.

Firmwareless SSD demonstrates that SSD performance can be greatly improved and energy consumption reduced by removing computation resources such as the CPU and DRAM.

* Microbenchmark results for Firmwareless SSD are normalized to those of Firmware SSD, which is implemented based on firmware execution.

3. AI Accelerator

Reduced data movement overhead by removing SSDs from the data processing path.

- The data is stored in the accelerator’s large-capacity PRAM instead of the host’s storage (SSD).

- The accelerator directly accesses the data in the PRAMs and executes data processing.

- AI Accelerator gives better computation performance and consumes less energy than conventional AI accelerators.

A well-known issue with the FPGA-based hardware acceleration approach is that the accelerator’s on-chip memory has too little capacity to accommodate all input/output data. When the data do not fit in the on-chip memory, a host overflow occurs, which requires frequent data transfers between the host and the FPGA board.

MEMRAY proposes AI Accelerator, a scalable heterogeneous deep learning accelerator. AI Accelerator realizes an FPGA-based domain-specific architecture for DNNs, but exploits the large storage space of phase change memory (PRAM). Specifically, our AI Accelerator integrates a general processor, systolic-array hardware, and PRAM controllers into a single chip. AI Accelerator connects an on-board PRAM backend complex, which can contain many PRAM modules, at the FPGA side as working memory. The systolic-array hardware accelerates deep learning operations, in particular general matrix multiplication (GEMM) and convolution. In parallel, the PRAM controllers and backend complex address the limited on-chip memory capacity of modern accelerators. MEMRAY prototypes AI Accelerator on our non-volatile computing platform and exposes it to the host over PCIe.
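To make the role of the systolic array concrete, here is a minimal software simulation of an output-stationary systolic array computing a GEMM. It is a generic textbook-style sketch, not a description of MEMRAY's hardware: the dataflow choice (output-stationary), the function name `systolic_gemm`, and the cycle model are all assumptions made for illustration.

```python
def systolic_gemm(a, b):
    """Simulate an output-stationary systolic array computing C = A x B.

    Processing element (i, j) holds the accumulator for C[i][j]. Rows of A
    stream in from the left and columns of B from the top, each skewed by
    one cycle per row/column, so operand pair k reaches PE (i, j) at
    cycle t = i + j + k.
    """
    n, k_dim, m = len(a), len(b), len(b[0])
    if any(len(row) != k_dim for row in a):
        raise ValueError("inner dimensions of A and B must match")

    c = [[0] * m for _ in range(n)]
    total_cycles = n + m + k_dim - 2  # last operand pair arrives at cycle n+m+k_dim-3
    for t in range(total_cycles):
        for i in range(n):
            for j in range(m):
                k = t - i - j  # which operand pair is at PE (i, j) this cycle
                if 0 <= k < k_dim:
                    c[i][j] += a[i][k] * b[k][j]  # multiply-accumulate in place
    return c, total_cycles


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
product, cycles = systolic_gemm(a, b)
print(product, cycles)
```

Each processing element only performs a multiply-accumulate on operands passed from its neighbours, which is why the array itself needs little local storage and depends on a large backing memory for the matrices.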

AI Accelerator minimizes host overflow by storing large machine learning data in the accelerator’s non-volatile PRAM, which provides a larger memory capacity than conventional DRAM. Because host overflow is minimized, AI Accelerator gives better computation performance and consumes less energy than conventional AI accelerators.
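The effect of capacity on host overflow can be estimated with simple arithmetic. The sketch below counts how many host-to-accelerator transfers one pass over a dataset needs for a given accelerator-side memory capacity. All sizes are hypothetical examples chosen for illustration, and `transfers_per_pass` is an assumed helper, not part of any MEMRAY interface.

```python
def transfers_per_pass(dataset_bytes, accelerator_memory_bytes):
    """Return how many chunks must be moved from the host for one pass.

    If the whole dataset fits in accelerator-side memory, a single load is
    enough; otherwise the data must be streamed in capacity-sized chunks.
    """
    if accelerator_memory_bytes <= 0:
        raise ValueError("accelerator memory capacity must be positive")
    # Ceiling division without floating point.
    return -(-dataset_bytes // accelerator_memory_bytes)


GIB = 1024 ** 3
MIB = 1024 ** 2

dataset = 64 * GIB  # hypothetical dataset size
on_chip_only = transfers_per_pass(dataset, 32 * MIB)  # assumed on-chip capacity
with_pram = transfers_per_pass(dataset, 128 * GIB)    # assumed PRAM capacity

print(f"on-chip memory only: {on_chip_only} transfers per pass")
print(f"with on-board PRAM:  {with_pram} transfer per pass")
```

Workloads that iterate over the same data many times would pay the on-chip-only cost on every pass, whereas data resident in on-board PRAM is loaded once.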

4. Disaggregated Persistent Pooled Memory System

Broke the processor-memory dependency and provided a huge non-volatile memory space.

- Computing nodes can use the persistent pooled memory as their main memory, instead of local DRAM memory.

- Integrates many PRAM modules for huge non-volatile memory space and manages cache coherence.

The demand for large memory space is increasing significantly in modern computing systems. To increase the memory space in a conventional system, the number of processors must be increased too, which raises the total cost of ownership (TCO) of a larger memory space. If memory can be disaggregated from processors, the TCO of a larger memory space can be reduced.

MEMRAY proposes a disaggregated persistent pooled memory system that integrates many PRAM modules into a huge non-volatile memory space and manages cache coherence between computing nodes and the persistent pooled memory. Specifically, our persistent pooled memory system can be connected to multiple computing nodes through a PCIe switch and provides a shared memory space for those nodes. The computing nodes can use the persistent pooled memory as their main memory, which removes the processor-memory dependency. Coherence between the multiple computing nodes and the persistent pooled memory is managed by our cache coherence protocols, which are optimized for a key property of PRAM: its write latency is longer than that of DRAM.
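The text does not specify MEMRAY's coherence protocol, so the following is only a generic illustration of one way a pooled memory could keep node caches coherent while limiting slow PRAM writes: a directory-based, write-invalidate scheme with write-back caching. Every name here (`PooledMemory`, `Node`, `fetch`, `acquire_exclusive`, `pram_writes`) is hypothetical.

```python
class PooledMemory:
    """Hypothetical directory for a shared PRAM pool (not MEMRAY's protocol)."""

    def __init__(self):
        self.pram = {}        # address -> value stored in PRAM
        self.sharers = {}     # address -> set of node ids holding a clean copy
        self.owner = {}       # address -> node id holding a dirty copy
        self.nodes = {}       # node id -> Node
        self.pram_writes = 0  # number of (slow) PRAM writes performed

    def attach(self, node):
        self.nodes[node.node_id] = node

    def _write_back(self, addr):
        # Flush a dirty copy to PRAM and downgrade its holder to a sharer.
        owner_id = self.owner.pop(addr, None)
        if owner_id is not None:
            self.pram[addr] = self.nodes[owner_id].cache[addr]
            self.pram_writes += 1
            self.sharers.setdefault(addr, set()).add(owner_id)

    def fetch(self, node_id, addr):
        self._write_back(addr)  # make PRAM current before serving the read
        self.sharers.setdefault(addr, set()).add(node_id)
        return self.pram.get(addr, 0)

    def acquire_exclusive(self, node_id, addr):
        if self.owner.get(addr) == node_id:
            return  # already the exclusive owner, nothing to do
        self._write_back(addr)
        for other in self.sharers.pop(addr, set()):
            if other != node_id:
                self.nodes[other].cache.pop(addr, None)  # invalidate stale copy
        self.owner[addr] = node_id


class Node:
    """Hypothetical computing node with a local write-back cache."""

    def __init__(self, node_id, pool):
        self.node_id = node_id
        self.pool = pool
        self.cache = {}
        pool.attach(self)

    def read(self, addr):
        if addr not in self.cache:
            self.cache[addr] = self.pool.fetch(self.node_id, addr)
        return self.cache[addr]

    def write(self, addr, value):
        self.pool.acquire_exclusive(self.node_id, addr)
        self.cache[addr] = value  # stays dirty in cache; PRAM is not written yet


pool = PooledMemory()
node_a, node_b = Node("a", pool), Node("b", pool)
for v in (1, 2, 3):
    node_a.write(0x10, v)        # three writes, no PRAM write so far
seen_by_b = node_b.read(0x10)    # forces a single write-back of the value 3
writes_after_read = pool.pram_writes
node_b.write(0x10, 9)            # invalidates node a's copy
seen_by_a = node_a.read(0x10)    # node a observes the new value
```

In this sketch repeated writes by one node are absorbed in its cache and reach PRAM only when another node needs the data, which is one plausible way to accommodate PRAM's longer write latency.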

Our disaggregated persistent pooled memory system is currently under development. The first prototype will be available by the end of December 2020 and will be evaluated with RISC-V computing nodes.